
Python · scikit-learn · Random Forest · Pandas

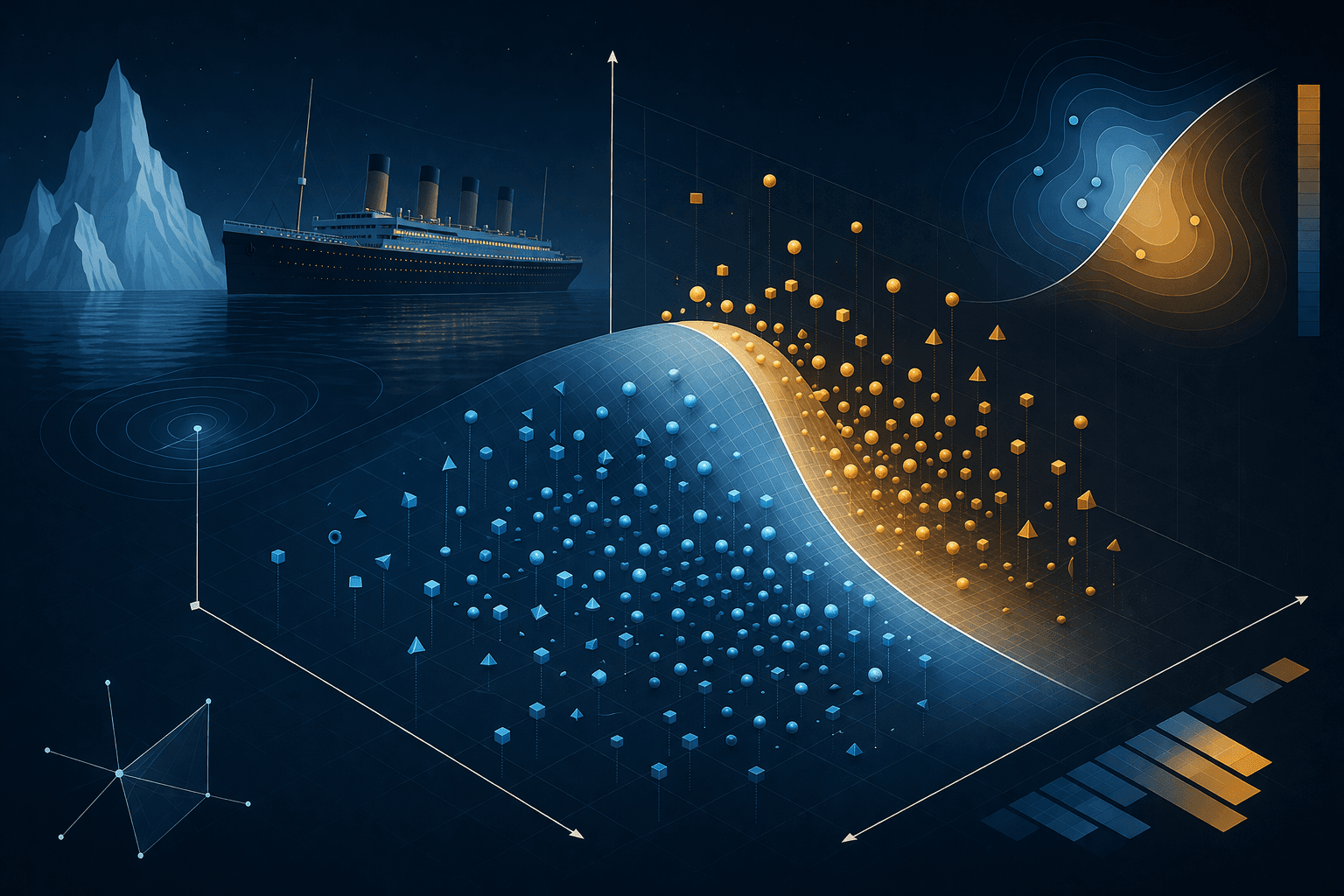

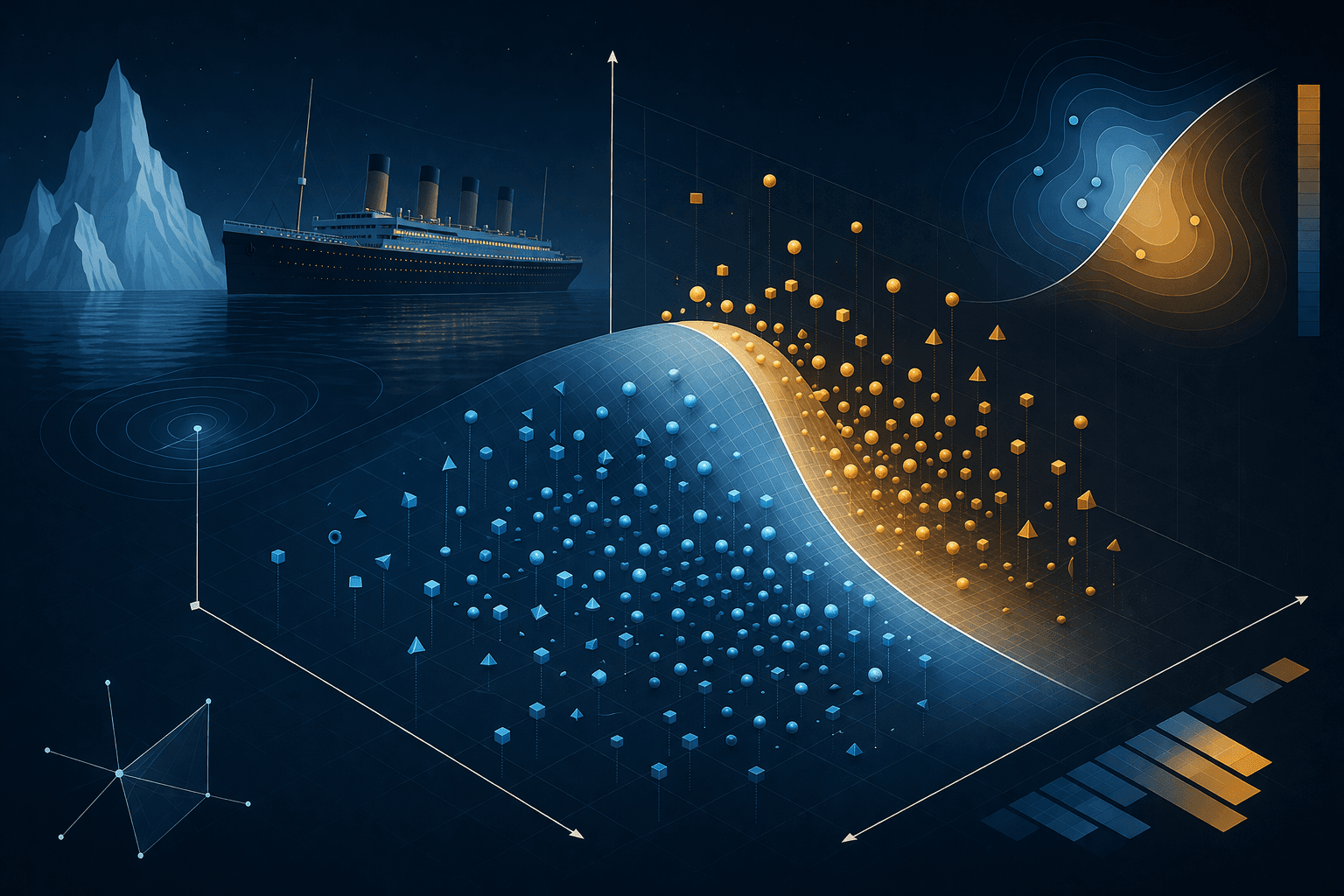

Kaggle — Titanic Survival Prediction

Compared logistic regression, decision trees, gradient boosting, XGBoost, and Random Forest on the classic Titanic dataset. Random Forest was chosen for the best bias-variance tradeoff, reaching about 82% cross-validated accuracy.

Year: 2025
Category: Python

Project Overview

This project tackles the Kaggle Titanic competition using shared feature engineering and stratified 5-fold cross-validation (CV). Logistic regression, single decision trees, gradient boosting, and XGBoost were also evaluated; Random Forest was kept as the main model for its strong accuracy, stable scores across folds, and easily interpreted feature importances. This portfolio entry links to the repository containing the Random Forest pipeline; its README records the full model comparison and preprocessing steps.